UX Researcher & Developer


September - December 2024

Internationally educated nurses (IENs) bring strong clinical skills to Canadian healthcare. However, hiring processes evaluate communication, cultural fit, and situational reasoning as much as clinical expertise. For IENs, that gap between what they know and how they're assessed is where opportunities are lost.

Onward is an AI-supported interview preparation web app that delivers structured practice, real-time transcription, and role-aligned feedback tailored specifically to the IEN experience. It was built for the BCIT Digital Design & Development Year 2 showcase exploring AI for underrepresented communities.

As UX Researcher and Developer, I worked across both ends of the process, from defining the problem through primary research to building the AI pipeline that powers the final product.

The team explored how AI could support newcomers to Canada across areas such as services, housing, and employment. Employment quickly emerged as a critical milestone for financial stability and social integration.

Within healthcare, immigrants make up 25% of the Canadian nursing workforce, yet nearly 50% of IENs are overqualified for their current roles. Employment barriers aren't about clinical competence. They're about the gap between what these nurses know and what Canadian hiring processes are designed to evaluate.

Healthcare interviews evaluate communication, situational reasoning, and alignment with workplace norms, not just clinical expertise. For internationally educated nurses, that distinction matters. Translating prior experience into culturally aligned responses introduces friction that generic preparation tools aren't built to address.

Research identified four interconnected barriers shaping the IEN experience:

Many IENs face long and costly licensing processes, often requiring extra exams, bridging programs, and courses. These hurdles delay re-entry into their profession and contribute to underemployment.

Canadian healthcare interviews emphasize soft skills, cultural competency, and local practices. IENs often struggle with unfamiliar formats, terminology, and ways of presenting experience.

Interview anxiety is heightened by unfamiliar hiring practices and limited culturally relevant preparation. Because the STAR (Situation, Task, Action, Result) method is often new to IENs, many struggle to communicate their skills confidently without tailored feedback.

Programs such as the Foreign Credential Recognition Program address licensing challenges, but few initiatives focus on interview preparation and confidence building. This gap became the focus of our project.

How might we help internationally educated nurses translate their clinical experience into responses that resonate within Canadian healthcare hiring contexts?

Primary surveys and secondary research identified common preparation patterns among healthcare professionals, along with barriers that were more pronounced for internationally educated nurses.

Existing tools such as Yoodli, Google Interview Warmup, and PrepMeUp focused on generic interview prep, lacking healthcare-specific context and real-time feedback.

These gaps confirmed that the solution needed to be genuinely healthcare-specific and context-aware, not a generic interview tool with surface-level customization.

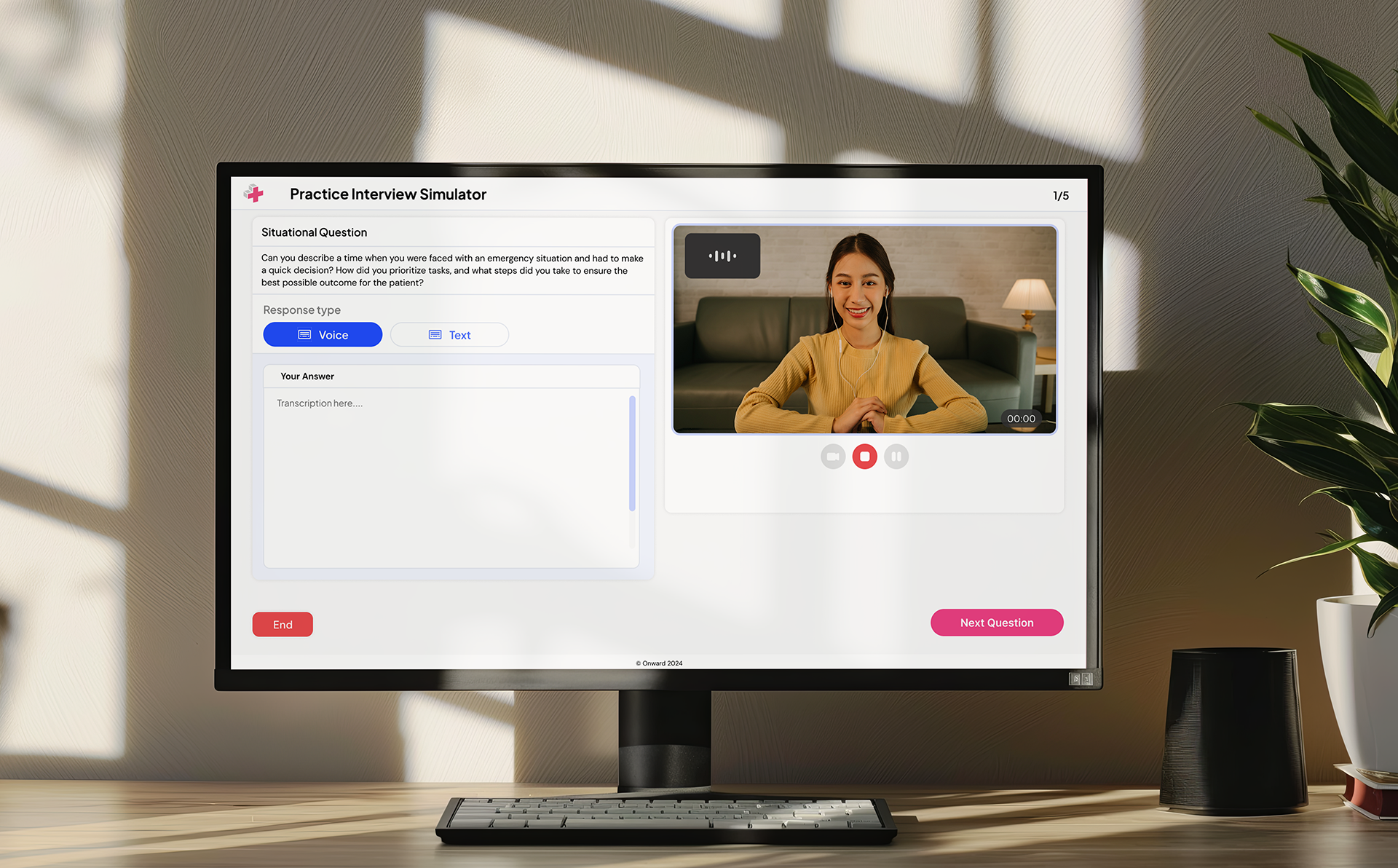

Onward is an AI-supported interview preparation web app built specifically for internationally educated nurses. Unlike generic tools, it combines structured practice, real-time transcription, and role-aligned feedback within a healthcare hiring context.

The platform centers on three integrated capabilities:

Users upload a resume and job posting to generate context-specific interview questions aligned with healthcare hiring expectations.

Azure Speech transcribes responses in real time during mock interviews, surfacing filler words, pacing, and clarity gaps.

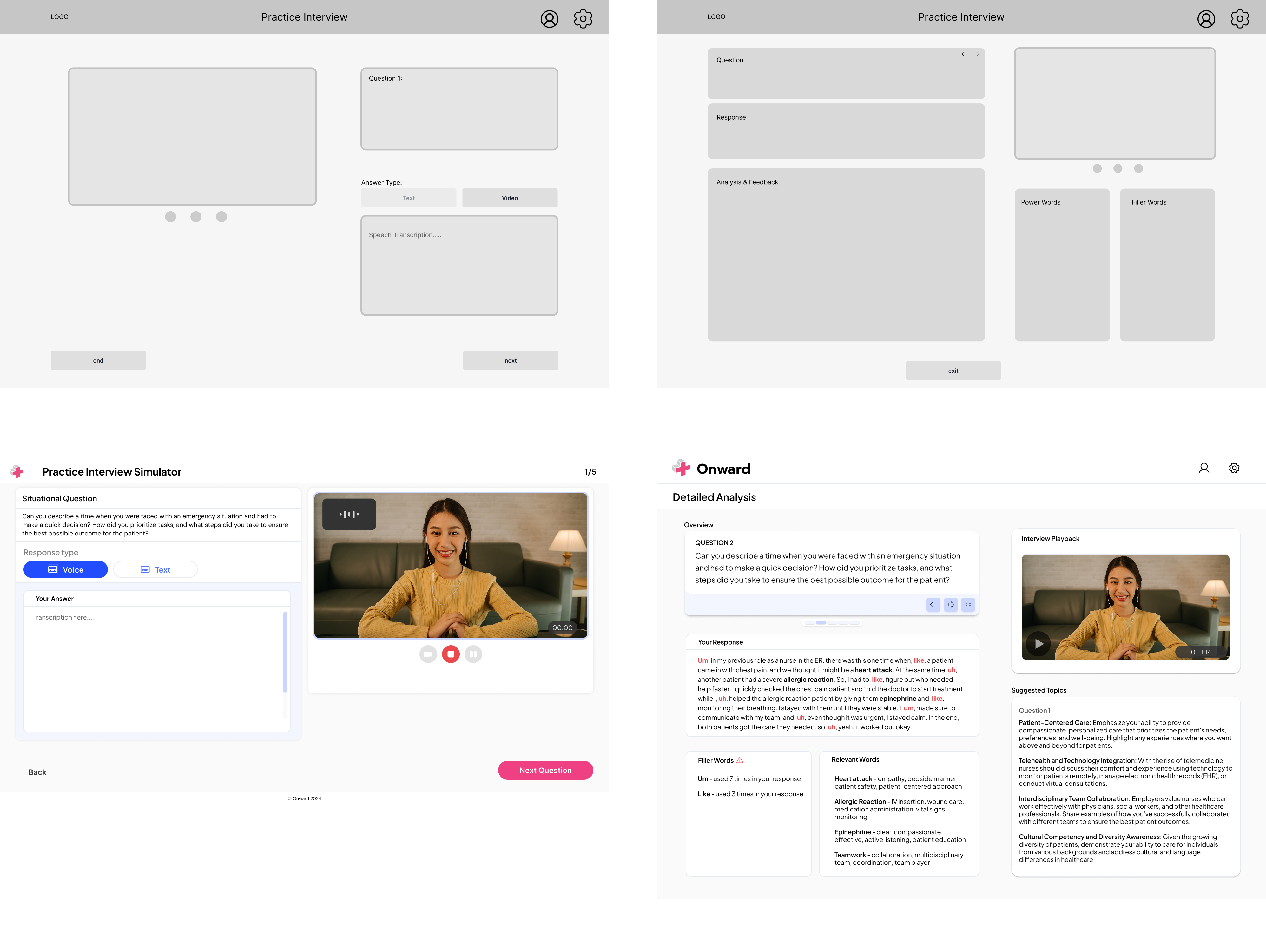
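Once a transcript exists, filler-word and pacing signals reduce to simple counting. The sketch below is a hypothetical post-processing step, not the Onward implementation: it assumes the final recognized text and the answer's duration in seconds have already been collected from Azure Speech, that fillers appear verbatim in that text, and that the `FILLERS` vocabulary is illustrative.

```typescript
// Hypothetical filler vocabulary (single words only). Naive: every "like" counts.
const FILLERS = new Set(["um", "uh", "er", "ah", "like", "basically", "actually"]);

interface DeliveryMetrics {
  wordCount: number;
  wordsPerMinute: number;
  fillerCount: number;
  fillers: Record<string, number>; // occurrences of each filler that appeared
}

// Computes simple delivery metrics from a finished transcript.
// Assumes `transcript` is plain recognized text and `durationSeconds` is the answer length.
function analyzeDelivery(transcript: string, durationSeconds: number): DeliveryMetrics {
  // Lowercase and keep only letters and apostrophes so "Um," matches "um".
  const words = transcript
    .toLowerCase()
    .split(/\s+/)
    .map((w) => w.replace(/[^a-z']/g, ""))
    .filter((w) => w.length > 0);

  const fillers: Record<string, number> = {};
  for (const word of words) {
    if (FILLERS.has(word)) {
      fillers[word] = (fillers[word] ?? 0) + 1;
    }
  }
  const fillerCount = Object.values(fillers).reduce((sum, n) => sum + n, 0);
  const minutes = durationSeconds / 60;

  return {
    wordCount: words.length,
    wordsPerMinute: minutes > 0 ? Math.round(words.length / minutes) : 0,
    fillerCount,
    fillers,
  };
}
```

Keeping this step separate from recognition means the same metrics can be recomputed from a stored transcript after the session ends.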

AI analyzes responses against resume and job context, returning categorized insights aligned with interview expectations.

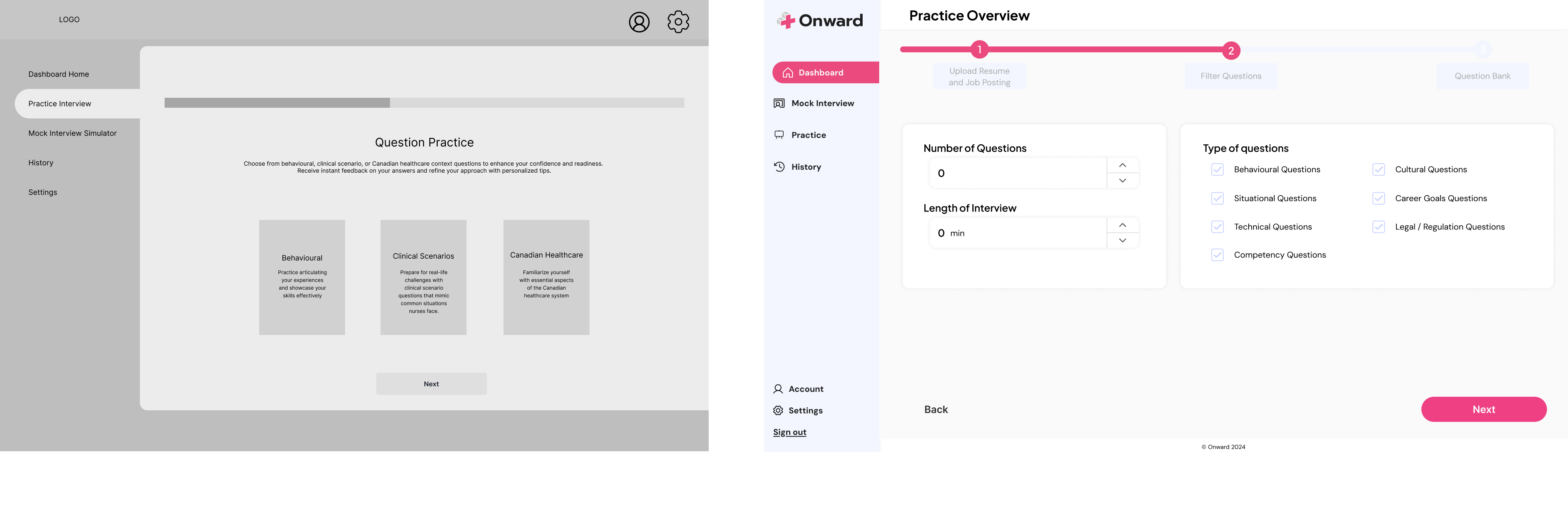
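Because the feedback screen renders model output directly, it helps to validate that output before display. A minimal sketch, assuming a hypothetical response shape (an `insights` array whose items carry a `category`, a `summary`, and `suggestions`) and a hypothetical category set; the actual Onward schema isn't documented here.

```typescript
// Hypothetical feedback categories; the real category set may differ.
const CATEGORIES = ["communication", "structure", "clinical_reasoning", "cultural_fit"] as const;
type Category = (typeof CATEGORIES)[number];

interface Insight {
  category: Category;
  summary: string;
  suggestions: string[];
}

// Runtime check that one item matches the assumed Insight shape.
function isInsight(value: unknown): value is Insight {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.category === "string" &&
    (CATEGORIES as readonly string[]).includes(v.category) &&
    typeof v.summary === "string" &&
    Array.isArray(v.suggestions) &&
    v.suggestions.every((s) => typeof s === "string")
  );
}

// Parses raw model output and groups valid insights by category.
// Malformed JSON or items that don't match the schema are dropped rather than rendered.
function parseFeedback(raw: string): Map<Category, Insight[]> {
  const grouped = new Map<Category, Insight[]>();
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch {
    return grouped; // an empty result lets the UI show a retry state
  }
  const items = (parsed as { insights?: unknown } | null)?.insights;
  if (!Array.isArray(items)) return grouped;

  for (const item of items) {
    if (!isInsight(item)) continue;
    const bucket = grouped.get(item.category) ?? [];
    bucket.push(item);
    grouped.set(item.category, bucket);
  }
  return grouped;
}
```

Dropping invalid items instead of throwing keeps one malformed insight from blanking the whole feedback screen.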

Task-based testing with program instructors and peers revealed that users struggled with decision points before they even started practicing. Terminology like 'Mock Interview' and 'Practice Interview' caused hesitation, button labels were unclear, and users didn't understand why video was being recorded. The design process focused on resolving that friction directly.

Renamed “Practice Interview” to “Practice” to reduce redundancy and simplify language within the flow.

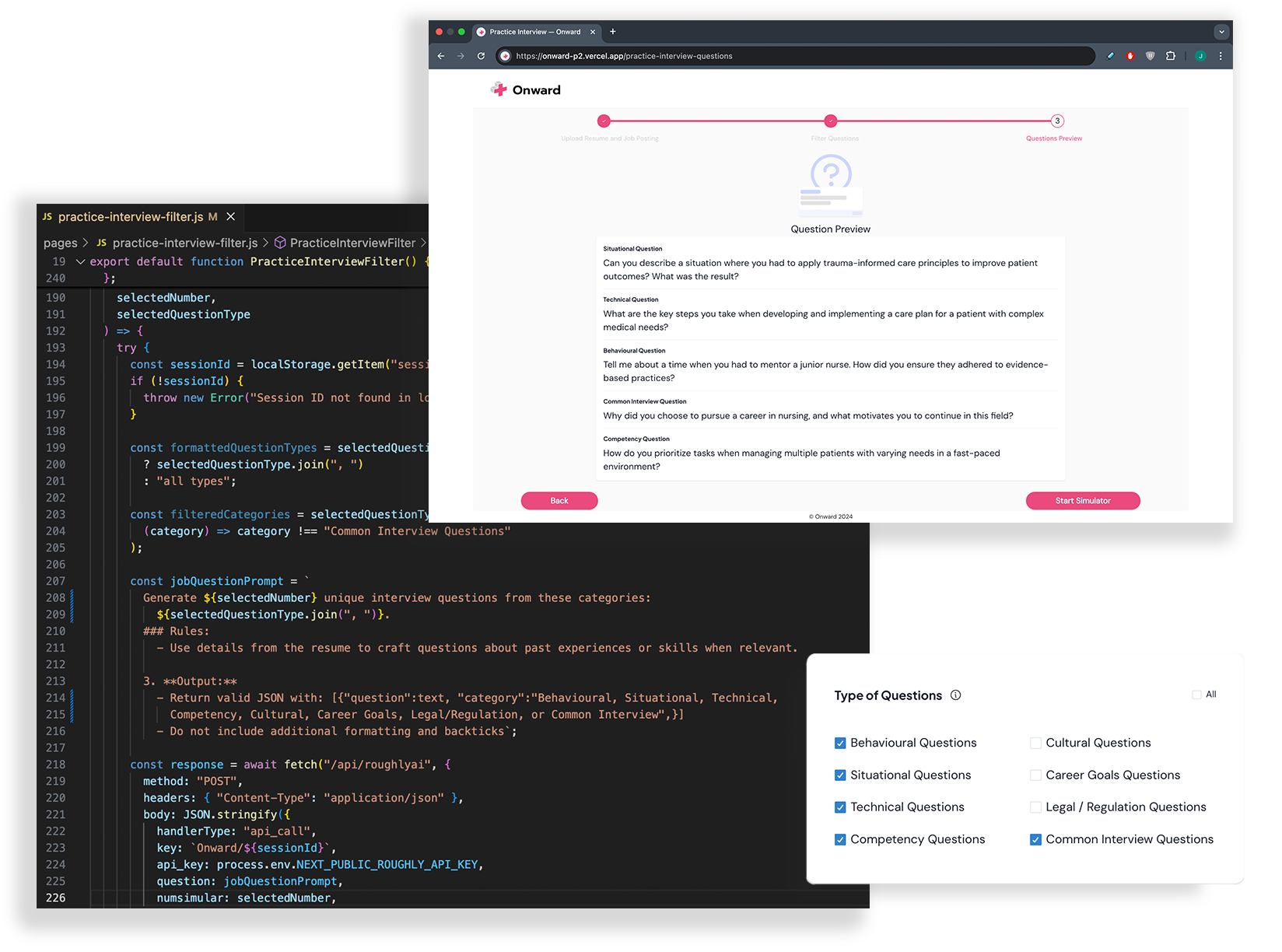

Introduced configurable controls for question count, interview length, and categories, along with a step-based progress indicator. This clarified expectations before entering the timed experience.

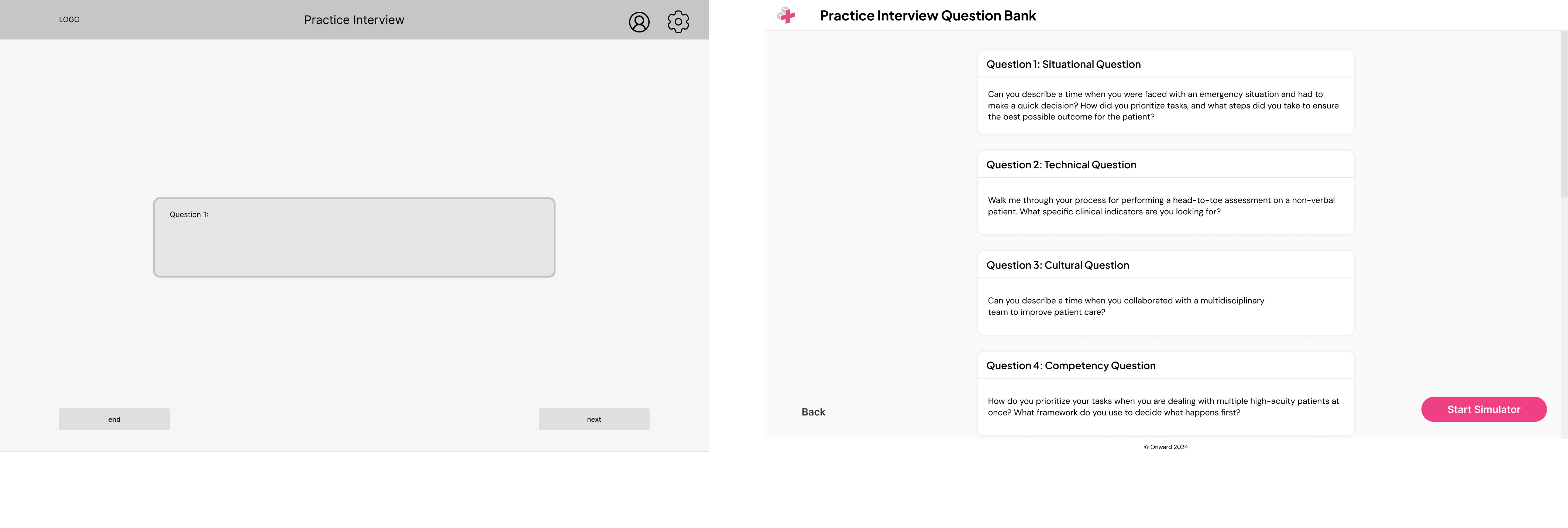
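One way those setup controls could map to a validated session configuration and a progress label is sketched below. The `SessionConfig` fields, the numeric bounds, and the four step names are hypothetical, chosen to mirror the flow described in this case study (setup, question overview, answering, feedback).

```typescript
// Hypothetical form state for the practice setup screen.
interface SessionConfig {
  questionCount: number;
  lengthMinutes: number;
  categories: string[];
}

// Assumed bounds for the setup controls.
const LIMITS = { minQuestions: 1, maxQuestions: 10, minMinutes: 5, maxMinutes: 60 };

// Hypothetical flow steps shown by the progress indicator.
const STEPS = ["Setup", "Overview", "Practice", "Feedback"] as const;
type Step = (typeof STEPS)[number];

function clamp(value: number, min: number, max: number): number {
  if (!Number.isFinite(value)) return min; // empty or invalid input falls back to the minimum
  return Math.min(max, Math.max(min, Math.round(value)));
}

// Normalizes raw form input so the timed session always starts from a valid state.
function normalizeConfig(input: SessionConfig): SessionConfig {
  return {
    questionCount: clamp(input.questionCount, LIMITS.minQuestions, LIMITS.maxQuestions),
    lengthMinutes: clamp(input.lengthMinutes, LIMITS.minMinutes, LIMITS.maxMinutes),
    categories: Array.from(new Set(input.categories)), // drop duplicate selections
  };
}

// Whole seconds available per question; expects a normalized config (questionCount >= 1).
function secondsPerQuestion(config: SessionConfig): number {
  return Math.floor((config.lengthMinutes * 60) / config.questionCount);
}

// Label for the step-based progress indicator, e.g. "Step 2 of 4: Overview".
function stepLabel(step: Step): string {
  return `Step ${STEPS.indexOf(step) + 1} of ${STEPS.length}: ${step}`;
}
```

Normalizing before the timer starts means the timed screens never have to handle out-of-range values.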

The initial flow previewed questions one at a time before answering, creating repeated interruptions.

The revised model introduced a consolidated question overview, allowing users to prepare in advance and complete the practice session without stopping between prompts.

The answering interface remained largely consistent, with minor layout refinements for clarity.

The feedback screen was restructured to align with AI-generated output, ensuring structured insights could be presented clearly within technical constraints.

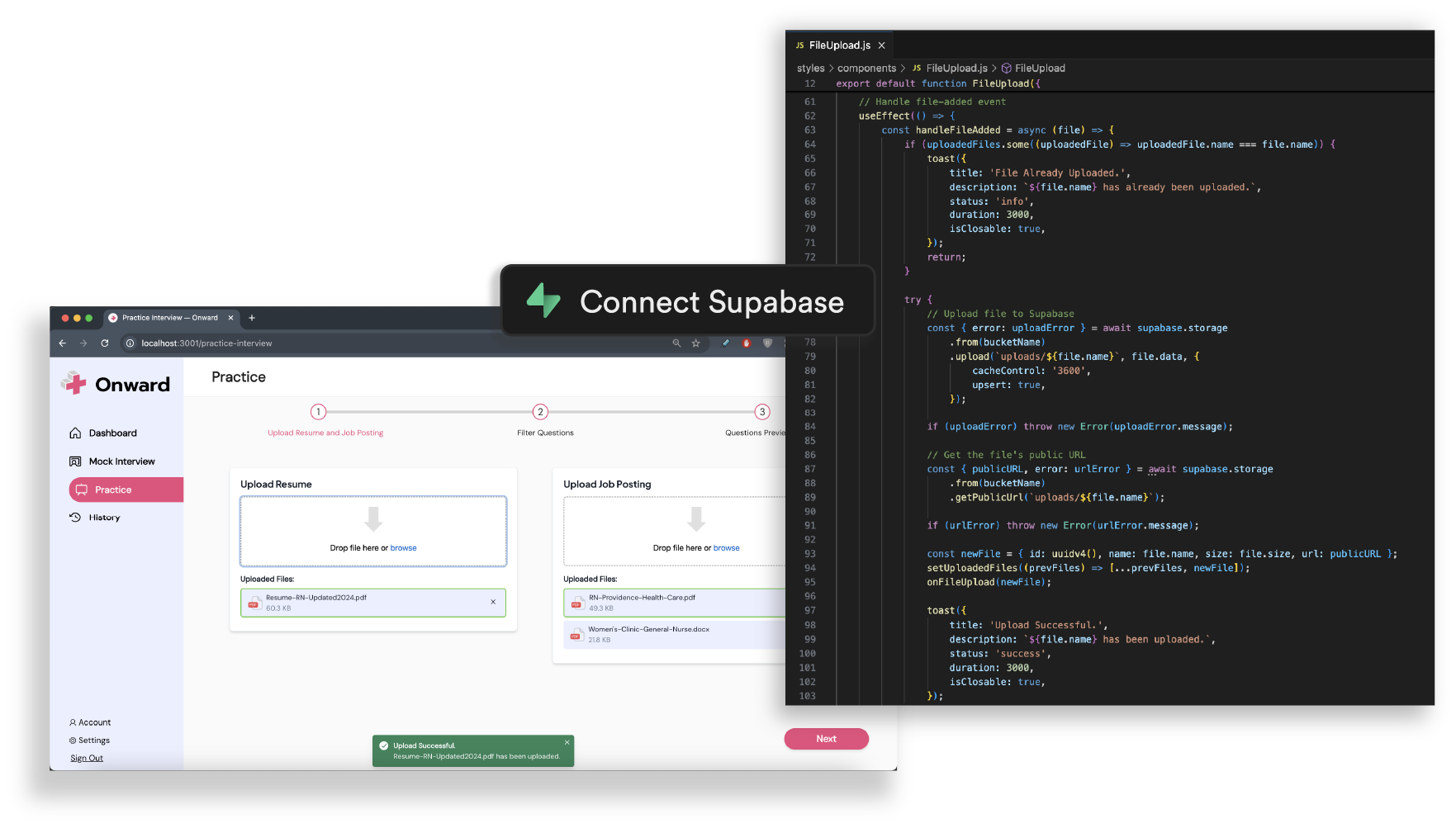

The MVP was built with Next.js and React on the frontend, with Supabase managing authentication and storage. Development focused on implementing the core practice and feedback pipeline, from file upload to AI analysis.

Personalizing questions to each user's actual role and experience was central to the product's value. Drag-and-drop uploads via Uppy stored files securely in Supabase Storage, with public URLs passed directly into the AI pipeline for question generation.

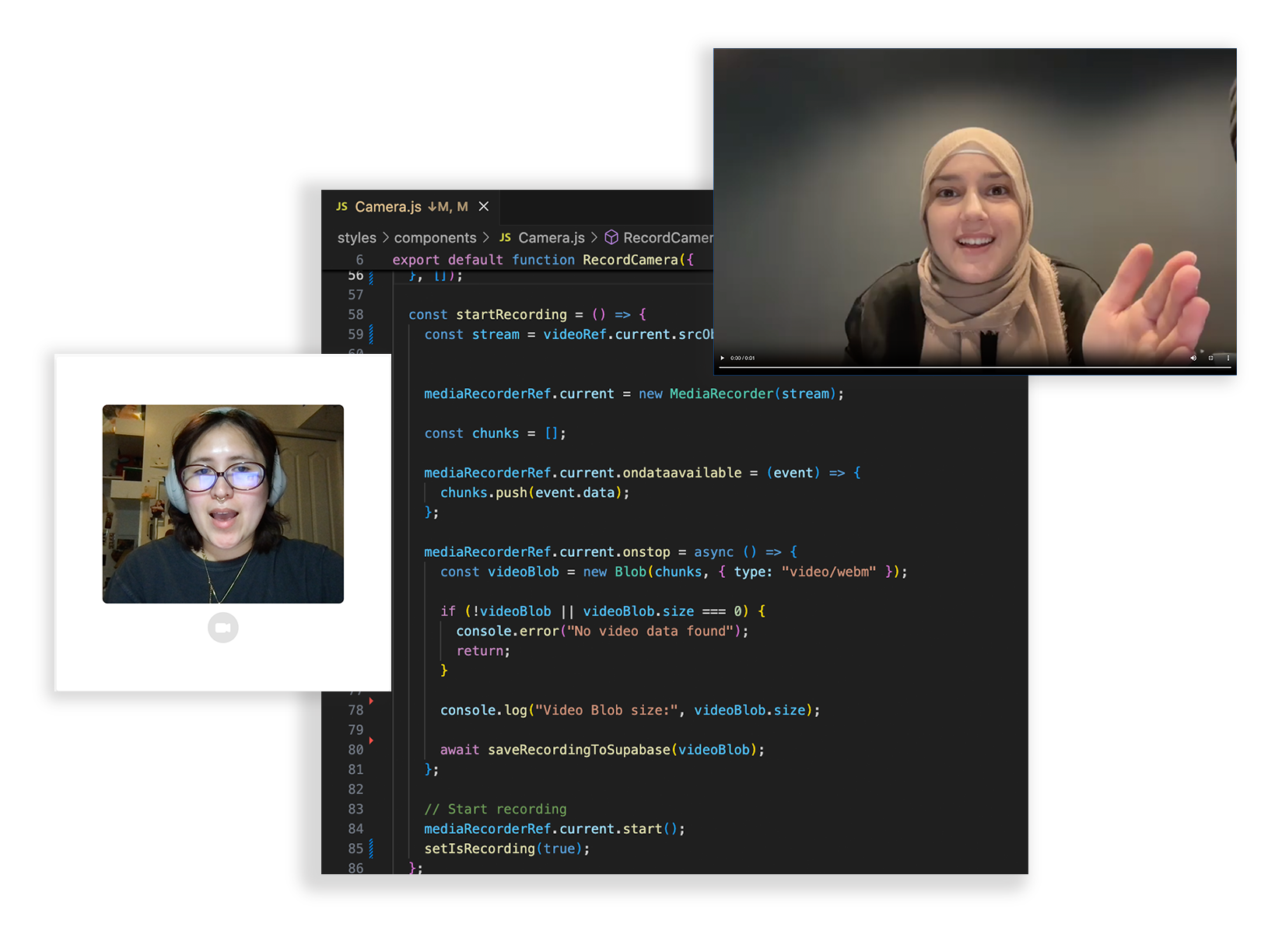
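The upload step reduces to three moves: build a per-user object path, upload the file, then read back its public URL for the AI request. To stay self-contained, this sketch codes against a minimal `StorageBucket` interface modeled on the subset of supabase-js v2's `supabase.storage.from(bucket)` used here (`upload` and `getPublicUrl`). The path scheme, accepted file types, and 5 MB limit are assumptions, and the Uppy wiring is omitted.

```typescript
// Minimal shape of the storage calls used below, modeled on supabase-js v2.
interface StorageBucket {
  upload(
    path: string,
    body: Blob,
    options?: { contentType?: string; upsert?: boolean },
  ): Promise<{ data: { path: string } | null; error: { message: string } | null }>;
  getPublicUrl(path: string): { data: { publicUrl: string } };
}

// Hypothetical upload rules for resumes and job postings.
const ALLOWED_TYPES = new Set(["application/pdf", "text/plain"]);
const MAX_BYTES = 5 * 1024 * 1024;

// Builds a timestamped object path under the user's folder to avoid name collisions.
function buildObjectPath(userId: string, fileName: string, now: number = Date.now()): string {
  const safeName = fileName.toLowerCase().replace(/[^a-z0-9.]+/g, "-");
  return `${userId}/${now}-${safeName}`;
}

// Validates, uploads, and returns the public URL handed to question generation.
async function uploadForAnalysis(
  bucket: StorageBucket,
  userId: string,
  fileName: string,
  file: Blob,
): Promise<string> {
  if (!ALLOWED_TYPES.has(file.type)) throw new Error(`Unsupported file type: ${file.type}`);
  if (file.size > MAX_BYTES) throw new Error("File exceeds the 5 MB limit");

  const path = buildObjectPath(userId, fileName);
  const { data, error } = await bucket.upload(path, file, { contentType: file.type, upsert: false });
  if (error || !data) throw new Error(`Upload failed: ${error?.message ?? "unknown error"}`);

  return bucket.getPublicUrl(data.path).data.publicUrl;
}
```

Coding against a narrow interface also makes the upload error paths easy to exercise with a fake bucket, which matters because those paths are exactly the kind of edge case that never shows up in a Figma prototype.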

Self-review of non-verbal communication was a key user need that generic tools don't address. In-browser recording using the MediaRecorder API captured video responses, stored them in Supabase, and made them available for post-session playback.
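A minimal in-browser recording sketch using the standard MediaRecorder API: pick a container the browser supports, collect chunks as they arrive, and assemble a single `Blob` when the answer ends. The candidate MIME list and the one-second timeslice are assumptions, and the function names are hypothetical.

```typescript
// Preferred containers, most specific first (an assumption; support varies by browser).
const CANDIDATE_TYPES = [
  "video/webm;codecs=vp9,opus",
  "video/webm;codecs=vp8,opus",
  "video/webm",
  "video/mp4",
];

// Returns the first supported type, or undefined to let the browser choose its default.
function pickMimeType(isSupported: (type: string) => boolean): string | undefined {
  return CANDIDATE_TYPES.find((type) => isSupported(type));
}

// Starts recording a camera/microphone stream; `stop()` resolves with the full video Blob.
function startRecording(stream: MediaStream): { stop: () => Promise<Blob> } {
  const mimeType = pickMimeType((type) => MediaRecorder.isTypeSupported(type));
  const recorder = new MediaRecorder(stream, mimeType ? { mimeType } : undefined);
  const chunks: Blob[] = [];

  recorder.ondataavailable = (event: BlobEvent) => {
    if (event.data.size > 0) chunks.push(event.data);
  };
  recorder.start(1000); // emit a chunk roughly every second

  return {
    stop: () =>
      new Promise<Blob>((resolve) => {
        recorder.onstop = () => {
          stream.getTracks().forEach((track) => track.stop()); // release camera and mic
          resolve(new Blob(chunks, { type: recorder.mimeType }));
        };
        recorder.stop();
      }),
  };
}
```

In the answer screen, the stream would come from `navigator.mediaDevices.getUserMedia({ video: true, audio: true })`, and the resolved `Blob` is what would be stored in Supabase for post-session playback.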

The feedback needed to feel role-specific, not generic. Uploaded resumes and job postings were analyzed via RoughlyAI, which returned structured JSON containing tailored interview questions, rendered dynamically based on the categories each user selected.
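Category-driven rendering can be sketched as a selection step over the returned questions. RoughlyAI's response format isn't described here, so the `GeneratedQuestion` shape (an `id`, `category`, and `text` per question) is a hypothetical stand-in, as is the round-robin rule that keeps one category from crowding out the others.

```typescript
// Hypothetical shape of one generated question in the structured JSON response.
interface GeneratedQuestion {
  id: string;
  category: string;
  text: string;
}

// Selects up to `count` questions from the chosen categories, alternating between
// categories so each selected category is represented before any category repeats.
function selectQuestions(
  questions: GeneratedQuestion[],
  selectedCategories: string[],
  count: number,
): GeneratedQuestion[] {
  // Group by selected category, preserving the model's original order within each group.
  const byCategory = new Map<string, GeneratedQuestion[]>();
  for (const category of selectedCategories) byCategory.set(category, []);
  for (const q of questions) byCategory.get(q.category)?.push(q);

  const groups = Array.from(byCategory.values()).filter((group) => group.length > 0);
  const selected: GeneratedQuestion[] = [];
  let round = 0;
  // Each round takes the next question from every group that still has one.
  while (selected.length < count && groups.some((group) => round < group.length)) {
    for (const group of groups) {
      if (selected.length >= count) break;
      if (round < group.length) selected.push(group[round]);
    }
    round++;
  }
  return selected;
}
```

Questions from unselected categories are simply never grouped, so changing the category filter only requires re-running the selection, not another AI request.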

Wording guides user choices.

Terms like "Practice Interview" alongside "Mock Interview" caused hesitation. Renaming to "Practice" gave users a clearer sense of progression and helped them choose with more confidence.

Design shifts in implementation.

Working as both researcher and developer meant experiencing edge cases firsthand, like how an upload flow handles errors, that weren't visible in Figma. It sharpened how I think about designing with implementation in mind.

Clarity and context are accessibility.

For IENs, preparation was already high-stress. Unclear instructions or inconsistent labels raised the barrier further, making low-cognitive-load design less of a checklist item and more of a cultural accessibility issue.